Why AI Is Redefining How We Move Parts

Robotic workcells are no longer blind, pre-programmed machines limited to repeating a single task. Across factory floors and fulfillment centers, rigid mechanical feeders and hard-coded automation routines are being replaced by systems that perceive, decide, and adapt. At the core of this shift is a new class of robotic intelligence powered by high-resolution 3D vision, real-time inference, and AI models trained on thousands of parts or none at all. Whether anchored to CAD geometry or generalizing from massive datasets, these systems are learning to handle the physical world with a level of precision and flexibility once thought exclusive to human hands.

Two technological architectures now dominate the frontier of robotic perception. One is geometry-first: a robot compares its sensor data against known CAD models, using physical features like edges, holes, and contours as anchors for sub-millimeter pose estimation. These systems are remarkably effective in structured environments, especially where parts are reflective, translucent, or tightly toleranced. The other is data-first, powered by large-scale Robotics Foundation Models trained on diverse visual and interaction data. These systems don’t need to know exactly what an object is; instead, they’ve learned how objects tend to behave, and how they can be reliably grasped, supported, or moved. That generalization makes them uniquely capable in high-SKU environments like e-commerce fulfillment, where today’s inventory wasn’t in the catalog yesterday.

For applied engineers, the implications are immediate: automation is no longer limited to tightly controlled part presentation. At Zaic Design, these capabilities are changing how we approach feeder strategy, changeover planning, and the broader architecture of robotic workcells. What used to require a new fixture or mechanism can now be solved with software and a smarter camera.

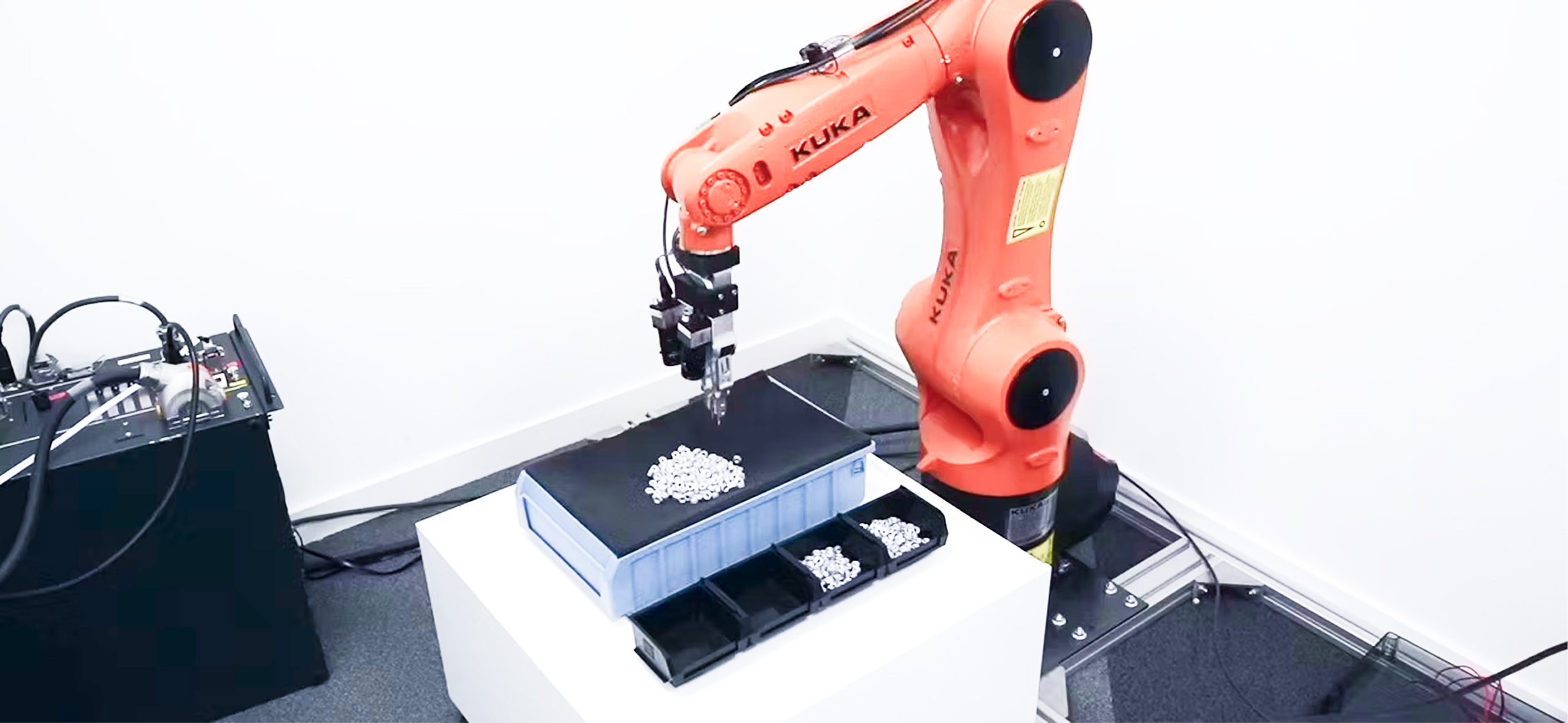

AI 3D vision-guided bottle feeding in production using Cambrian Robotics. Related case study: Revolutionising Production At Kao Corporation through AI 3D vision

Replacing Rigidity: Why AI is Redefining How We Move Parts

To fully appreciate the capabilities of modern AI-powered sorting, it is essential to understand the technological baseline it seeks to displace: the mechanical feeder. For over half a century, the vibratory bowl feeder has served as the backbone of high-volume manufacturing, offering a highly efficient yet fundamentally rigid solution to part singulation.

Limitations of Deterministic Mechanical Automation

Vibratory bowl feeders operate on principles of mechanical resonance, utilizing electromagnetic coils to drive parts up a spiraling, tooled track. The orientation of parts is controlled by mechanical traps and baffles that physically reject items not in the correct alignment. This system is deterministic; it relies on the physical properties of the part (center of gravity, geometry) to ensure singulation.

While vibratory feeders can achieve throughputs exceeding 1,000 parts per minute (PPM) for simple geometries, they suffer from severe inflexibility. A bowl feeder is typically machined to the specific tolerances of a single part design. If the product geometry changes, a frequent occurrence in modern “High-Mix, Low-Volume” (HMLV) manufacturing, the feeder often requires expensive retooling or complete replacement. This results in significant sunk costs and extended downtime during changeovers.

Furthermore, mechanical feeders are “blind.” They do not generate data regarding the quality or quantity of the parts they process. In contrast, AI-driven robotic cells act as edge computing nodes, digitizing the material flow and providing real-time analytics on inventory levels, defect rates, and throughput variability.

Below is an example of 3D vision-guided bin picking in a compact workcell envelope.

AI 3D vision-guided bottle feeding in production using Cambrian Robotics. Related case study: Revolutionising Production At Kao Corporation through AI 3D vision

The Economic Imperative for Flexible Automation

The transition to robotic sorting is driven by a shift in asset management strategy. Unlike dedicated mechanical feeders, robotic cells are “retaskable” assets. The core components (the 6-axis robot arm, the vision controller, and the safety enclosure) can be reprogrammed for entirely different tasks as production needs evolve. This extends the useful life of the capital equipment well beyond the lifecycle of any single product.

The economic case is that while the upfront Capital Expenditure (CAPEX) for robotics is higher, the Operational Expenditure (OPEX) benefits, driven by reduced changeover times, labor substitution, and asset reuse, often yield a superior Return on Investment (ROI) over a 3-to-5-year horizon.

Zaic Design also provides a free Automation ROI Calculator you can use to model your baseline costs and test “what-if” automation scenarios using your own assumptions: Try the ROI Calculator.

From Geometry to Semantics: How Robots Understand What They See

The effectiveness of any robotic sorting system starts with perception: its ability to understand what’s in front of it. In bin picking scenarios, that means estimating the precise position and orientation of each object in 3D space, a full six degrees of freedom (6-DoF): X, Y, Z, plus roll, pitch, and yaw. How well a system performs at this task depends heavily on the physics of its sensing method, especially when dealing with difficult surfaces, like shiny metals or transparent plastics, that reflect or distort light in unpredictable ways.
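To make the 6-DoF idea concrete, here is a minimal sketch that turns a pose into a 4x4 homogeneous transform and applies it to a point on the part. The function names, the radians convention, and the Z-Y-X (yaw-pitch-roll) rotation order are assumptions chosen for illustration; real vision systems and robot controllers each document their own conventions.

```python
import math


def pose_to_matrix(x, y, z, roll, pitch, yaw):
    """Build a 4x4 homogeneous transform from a 6-DoF pose.

    Assumes angles in radians and R = Rz(yaw) * Ry(pitch) * Rx(roll).
    """
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr, x],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr, y],
        [-sp, cp * sr, cp * cr, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def transform_point(T, point):
    """Map a point from the part frame into the camera/robot frame."""
    px, py, pz = point
    return tuple(
        T[i][0] * px + T[i][1] * py + T[i][2] * pz + T[i][3] for i in range(3)
    )


# Hypothetical pose: part origin at (0.1, 0.2, 0.3) m, rotated 90 degrees about Z.
T = pose_to_matrix(0.1, 0.2, 0.3, 0.0, 0.0, math.pi / 2)
grasp_point = transform_point(T, (1.0, 0.0, 0.0))
print([round(c, 6) for c in grasp_point])
```

A grasp point defined once in the part's own frame can be mapped this way into the robot's frame for every detected instance in the bin.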

The Physics of 3D Vision Modalities

Modern industrial vision relies primarily on three techniques: Structured Light, Active Stereo Vision, and Time-of-Flight (ToF). Each interacts differently with the optical properties of the target object.

Structured Light

This method involves projecting a known pattern (typically sinusoidal fringes, Gray codes, or phase-shifted patterns) onto the scene. A camera, offset by a known baseline, captures the deformation of this pattern as it drapes over the object’s geometry. The depth is calculated via triangulation.

Limitations: Traditional structured light requires the scene to be static during the projection sequence (which may take several frames). If the bin is moving or vibrating, the pattern blurs, resulting in severe depth artifacts. Additionally, the projected light can be scattered by reflective surfaces or pass through transparent ones, leading to “no data” voids.

Active Stereo Vision

This technique mimics human binocular vision, using two calibrated cameras to find corresponding feature points in the left and right images. A texture projector is often used to add artificial contrast to featureless surfaces (like smooth plastic), facilitating the stereo matching process. This method is generally more robust to ambient light and motion than pure structured light.
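Both structured light and stereo recover depth by triangulation. For a rectified stereo pair the standard relation is Z = f * B / d, where f is the focal length in pixels, B the baseline, and d the disparity. The sketch below applies that relation and its first-order error estimate; the camera numbers are illustrative assumptions, not the specification of any particular sensor.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth of a matched feature for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a valid match")
    return focal_px * baseline_m / disparity_px


def depth_uncertainty(focal_px, baseline_m, depth_m, disparity_error_px):
    """First-order depth error caused by a matching error of a few sub-pixels.

    Derived from Z = f * B / d, so dZ is approximately Z^2 / (f * B) * dd.
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


# Assumed example values: 1200 px focal length, 10 cm baseline, 150 px disparity.
z = depth_from_disparity(1200.0, 0.10, 150.0)
dz = depth_uncertainty(1200.0, 0.10, z, 0.1)
print(f"depth = {z:.3f} m, uncertainty = {dz * 1000:.2f} mm")
```

Because the error grows with the square of the distance, mounting the camera closer to the bin or widening the baseline tends to matter more than small gains in matching accuracy.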

Time-of-Flight (ToF)

ToF sensors emit a light pulse and measure the time it takes to return to the sensor. While fast, they often suffer from multipath interference (light bouncing around corners) and low spatial resolution compared to structured light, making them less suitable for precision picking of small parts.
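The pulse-timing principle reduces to distance = c * t / 2, since the light travels to the object and back. A small sketch, with hypothetical function names, shows how tight the timing has to be for a given depth resolution:

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_distance_m(round_trip_s):
    """Distance to the target; the pulse covers the path twice."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def timing_resolution_s(depth_resolution_m):
    """Round-trip timing precision needed to resolve a given depth step."""
    return 2.0 * depth_resolution_m / SPEED_OF_LIGHT_M_S


# Resolving 1 mm in depth needs timing on the order of picoseconds.
print(f"{timing_resolution_s(0.001) * 1e12:.2f} ps")
```

This picosecond-scale requirement is one reason millimeter-level depth is demanding for ToF, separate from the multipath and spatial-resolution issues noted above.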

The Challenge of Non-Lambertian Surfaces

A significant percentage of industrial components are non-Lambertian, meaning they do not scatter light uniformly. This presents two primary failure modes for vision systems:

Transparency: Clear plastics and glass allow projected light to pass through to the background. Structured light systems will calculate the depth of the background rather than the object, rendering the part invisible. ToF sensors may receive weak or distorted returns due to refraction.

Specularity (Reflectivity): Polished metals act as mirrors. When a laser line is projected onto a chrome cylinder, the camera may see the line reflected elsewhere in the scene (multipath error) or see a saturated “glint” that washes out local pixels. This results in point clouds with holes or spikes, causing the robot to crash or miss the pick.

Addressing these physical limitations has led to the divergence of perception architectures into two distinct schools: the geometry-driven approach and the data-driven approach.

How Robots Use CAD for High-Precision Picking

In precision manufacturing environments, such as automotive powertrain assembly or electronics manufacturing, the parts being handled are known in advance. Their dimensions, tolerances, and materials are defined in CAD files. Commercial systems, including Cambrian Robotics, exemplify an architecture that leverages this deterministic data to overcome the limitations of optical physics.

Mechanism of CAD-Based Localization

Unlike systems that rely solely on generating a dense point cloud and then segmenting blobs, CAD-driven perception uses the CAD model as the ground truth. The system’s AI is trained to detect specific geometric features (edges, corners, and primitives) within the 2D stereo images and match them to the 3D wireframe of the CAD model.

This “Sparse-to-Dense” inference method has two practical advantages.

Robustness to Transparency: Even if a transparent part does not reflect structured light, it refracts the background. The CAD-based AI detects the edges of this refraction and the distortion of the background texture. By fitting the known CAD geometry to these visible distortion boundaries, the system can infer the part’s pose with high accuracy, even if the “depth map” is empty.

Handling Reflectivity: Instead of being confused by specular highlights, the system anticipates them. It focuses on stable geometric edges (e.g., the silhouette of a bolt) which remain visible regardless of surface reflection. It treats the CAD model as a template that must be satisfied, filtering out optical noise that doesn’t fit the geometric constraints.
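One generic way to express "the CAD model is a template that must be satisfied" is a reprojection error: project known model points into the image under a candidate pose and measure how far they land from the detected features. The sketch below is an illustration of that general idea under a simple pinhole camera model, not a description of Cambrian Robotics' implementation; all names and numbers are assumptions.

```python
import math


def apply_pose(R, t, p):
    """Rotate and translate a model point into the camera frame."""
    return tuple(sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))


def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point to pixel coordinates."""
    X, Y, Z = point_cam
    return (fx * X / Z + cx, fy * Y / Z + cy)


def mean_reprojection_error(model_pts, detected_px, R, t, intrinsics):
    """Average pixel distance between projected CAD points and detections."""
    fx, fy, cx, cy = intrinsics
    total = 0.0
    for p, (u_obs, v_obs) in zip(model_pts, detected_px):
        u, v = project(apply_pose(R, t, p), fx, fy, cx, cy)
        total += math.hypot(u - u_obs, v - v_obs)
    return total / len(model_pts)


# Assumed setup: four feature points on a 20 mm part, seen from 0.5 m away.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
intrinsics = (1200.0, 1200.0, 640.0, 480.0)
model = [(0.0, 0.0, 0.0), (0.02, 0.0, 0.0), (0.0, 0.02, 0.0), (0.02, 0.02, 0.01)]
true_t = (0.0, 0.0, 0.5)
detections = [project(apply_pose(identity, true_t, p), *intrinsics) for p in model]

err_true = mean_reprojection_error(model, detections, identity, true_t, intrinsics)
err_off = mean_reprojection_error(model, detections, identity, (0.002, 0.0, 0.5), intrinsics)
print(f"true pose: {err_true:.3f} px, 2 mm offset: {err_off:.3f} px")
```

A pose solver searches for the rotation and translation that minimize this error. Detections that cannot be explained by any pose of the known geometry, such as a stray glint, show up as large residuals and can be rejected.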

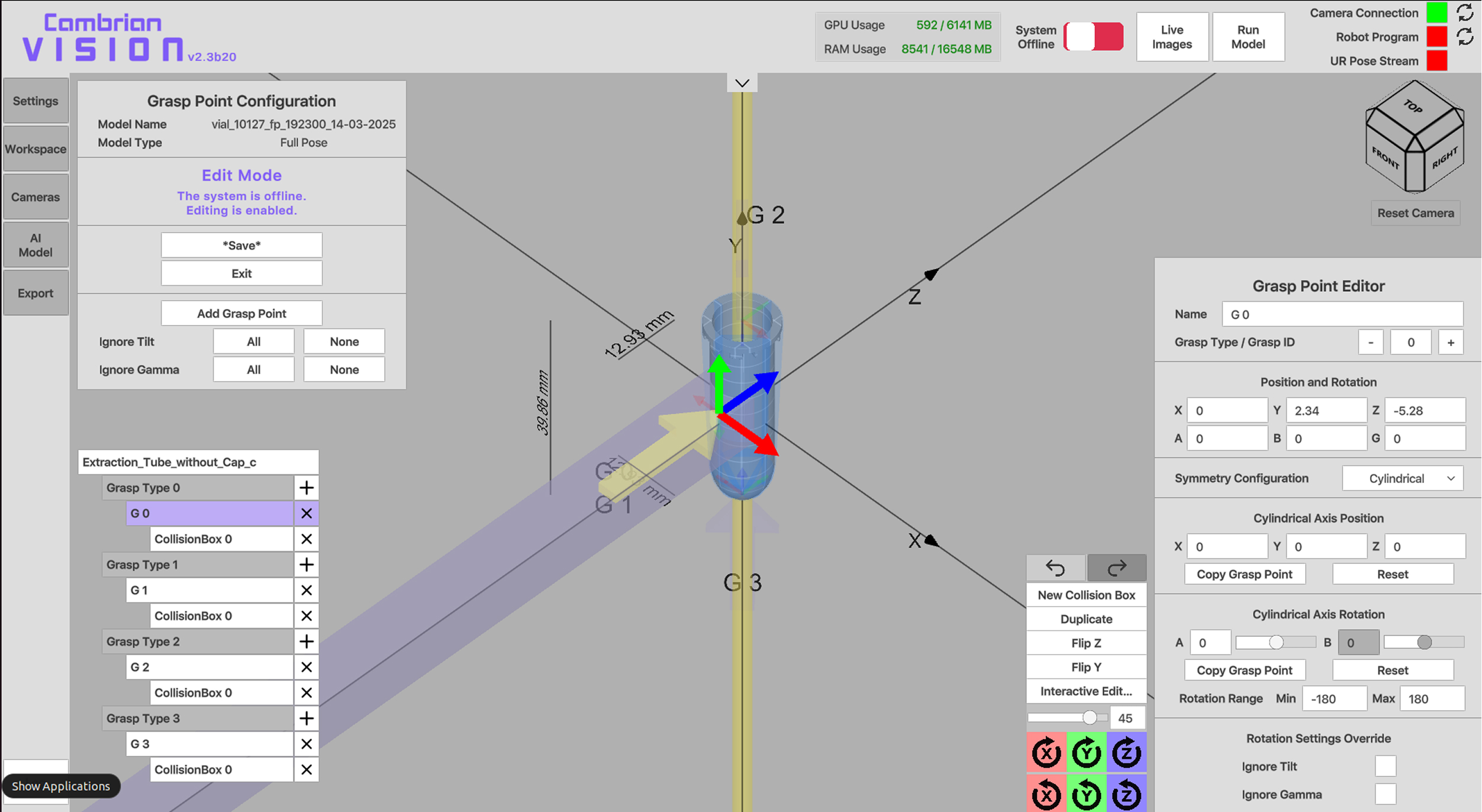

Defining pick points from a CAD file for a transparent part in a Cambrian Robotics Vision System

The “10-Minute” Deployment Workflow

A major innovation in this architecture is the rapid synthesis of training data. In traditional deep learning, training a model to recognize a new part might require thousands of labeled photographs. Cambrian Robotics’ workflow automates this via simulation:

CAD Import: The user uploads standard .obj or .fbx files of the component.

Grasp Definition: Using a GUI, the user defines permissible grasp points directly on the virtual model. This process typically takes under 10 minutes.

Synthetic Training: The system generates synthetic training data by rendering the CAD model in thousands of virtual orientations, lighting conditions, and clutter scenarios. The AI model trains on this synthetic dataset, learning to recognize the part’s geometry invariant of texture or color.

This workflow effectively solves the “Cold Start” problem, allowing for the deployment of robust bin-picking cells without the need for extensive data collection campaigns or physical part availability during the design phase.
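The synthetic-training step can be pictured as sampling a large set of render configurations. The sketch below draws uniformly random orientations (using the standard unit-quaternion sampling method) together with lighting and clutter settings. The field names and value ranges are hypothetical, and a real pipeline would hand each configuration to a renderer, which is outside the scope of this example.

```python
import math
import random


def random_unit_quaternion(rng):
    """Sample a rotation uniformly over all 3D orientations."""
    u1, u2, u3 = rng.random(), rng.random(), rng.random()
    a, b = math.sqrt(1.0 - u1), math.sqrt(u1)
    return (
        a * math.sin(2.0 * math.pi * u2),
        a * math.cos(2.0 * math.pi * u2),
        b * math.sin(2.0 * math.pi * u3),
        b * math.cos(2.0 * math.pi * u3),
    )


def sample_render_config(rng):
    """One hypothetical scene description for a synthetic training image."""
    return {
        "orientation_quat": random_unit_quaternion(rng),
        "light_intensity": rng.uniform(0.3, 2.0),  # assumed relative scale
        "clutter_count": rng.randint(0, 30),       # assumed number of extra parts
    }


rng = random.Random(0)  # fixed seed so the dataset is reproducible
configs = [sample_render_config(rng) for _ in range(1000)]
print(len(configs), configs[0]["clutter_count"])
```

Sampling orientations uniformly matters for bin picking because parts in a pile land in arbitrary poses, so naive sampling of Euler angles would over-represent some views.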

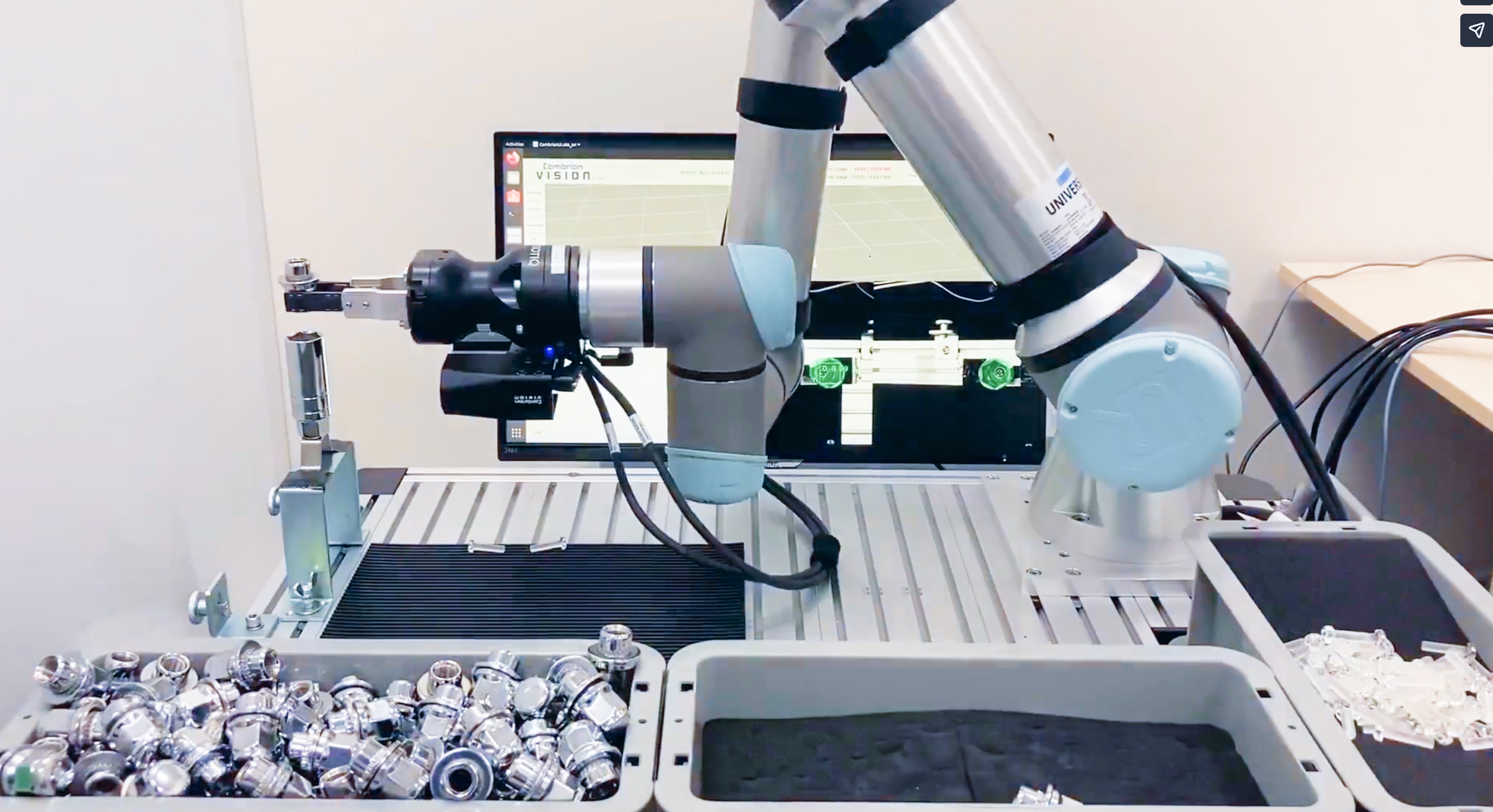

Cambrian Robotics vision integrated with a Universal Robots arm performing bin pick, 6-DoF pose alignment, and oriented placement across multiple part types. (Watch the video)

If you’re interested in exploring CAD-driven bin picking for your operation, contact Mark at The Knotts Company to arrange a demonstration of the Cambrian Robotics system.

The Data-First Approach: Generalization in the Wild

While CAD-based systems excel in structured manufacturing, the logistics and e-commerce sectors face a different challenge: the “Unknown Object” problem. A fulfillment center may handle millions of SKUs, with new items introduced daily. Maintaining a CAD library for every toothbrush, t-shirt, and toy is operationally impossible. This necessitates Robotics Foundation Models (RFMs), which leverage the scaling capabilities of Transformer architectures to achieve generalized robotic intelligence.

Covariant RFM-1: Tokenizing the Physical World

Covariant has developed RFM-1 (Robotics Foundation Model 1), an 8-billion-parameter model designed to provide robots with “human-like reasoning” capabilities. RFM-1 represents a departure from task-specific Convolutional Neural Networks (CNNs) toward a generative, multimodal architecture.

The Architecture of Physical Tokenization

RFM-1 treats robotic interaction as a sequence modeling problem, analogous to how Large Language Models (LLMs) predict the next word in a sentence. The model ingests diverse data streams (camera pixels, joint angles, gripper status, and text commands) and abstracts these sensor and command inputs into a unified control vocabulary within a shared embedding space. It then performs autoregressive “next-token prediction” to generate the sequence of robot actions required to achieve a goal.
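To give a feel for what "tokenizing" a continuous signal means, the sketch below discretizes a joint angle into one of a fixed number of bins and decodes it back. Uniform binning is a common generic technique and is used here only as an assumption for illustration; how RFM-1 actually tokenizes its inputs is not described in this article.

```python
import math


def to_token(value, low, high, n_bins):
    """Map a continuous value to an integer token by uniform binning."""
    clipped = min(max(value, low), high)
    index = int((clipped - low) / (high - low) * n_bins)
    return min(index, n_bins - 1)  # keep the upper bound inside the last bin


def from_token(token, low, high, n_bins):
    """Decode a token back to the center of its bin."""
    width = (high - low) / n_bins
    return low + (token + 0.5) * width


# Hypothetical vocabulary: 256 bins over a joint range of -pi to +pi radians.
N_BINS = 256
token = to_token(0.5, -math.pi, math.pi, N_BINS)
recovered = from_token(token, -math.pi, math.pi, N_BINS)
print(token, round(recovered, 4))
```

Once every modality is expressed as integers from a shared vocabulary, a sequence model can be trained to predict the next token regardless of whether it encodes a pixel patch, a word, or a motor command. The cost is quantization error, which is bounded by half a bin width.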

The Physics World Model

A defining capability of RFM-1 is its ability to simulate physical interactions. The model can generate short video sequences predicting the outcome of an action before it happens. For example, if tasked with picking a deformable bag of flour, RFM-1 can “imagine” how the bag will sag and deform under gravity. This internal physics simulator allows the robot to evaluate multiple grasp strategies in its “mind” and select the one with the highest probability of success, significantly improving reliability on novel, deformable objects.

Vision-Language-Action (VLA) Models

The integration of natural language processing with robotic control has given rise to Vision-Language-Action (VLA) models. These systems enable semantic tasking, allowing human operators to control robots using high-level commands rather than explicit code.

Semantic Understanding: A VLA model can process a command like “Pick the damaged red box.” To execute this, the model must understand the visual concept of “red,” the semantic concept of “damaged” (e.g., recognizing crushed corners or torn packaging), and map these to a specific manipulation policy. This capability is crucial for exception handling and quality control in unstructured environments.

Zero-Shot Generalization: VLAs leverage knowledge from vision-language encoders pre-trained on internet-scale image-text data (such as CLIP or SigLIP) to recognize objects they have never seen before. This allows a robot to pick a specific brand of cereal based solely on its textual description, without any prior training on that specific box.
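The zero-shot mechanism in CLIP-style encoders comes down to comparing a text embedding with image embeddings in a shared space and picking the closest match. The sketch below shows only that comparison step; the three-dimensional vectors are made-up stand-ins for real embeddings, which would come from a pre-trained encoder and have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_match(text_embedding, crop_embeddings):
    """Return the image crop whose embedding is closest to the text prompt."""
    scores = {
        name: cosine_similarity(text_embedding, emb)
        for name, emb in crop_embeddings.items()
    }
    return max(scores, key=scores.get), scores


# Toy stand-in vectors (assumptions, not real encoder outputs).
prompt = [0.9, 0.1, 0.0]  # e.g. the embedding of a text description of a cereal box
crops = {
    "crop_a": [0.8, 0.2, 0.1],
    "crop_b": [0.1, 0.9, 0.2],
    "crop_c": [0.0, 0.2, 0.9],
}
winner, scores = best_match(prompt, crops)
print(winner)
```

No per-SKU training is involved in this step, which is why a new product can be targeted by description alone, provided the encoder has seen enough similar imagery during pre-training.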

Training in the Cloud, Working on the Floor: Closing the Sim-to-Real Gap

Training large-scale models like RFMs entirely in the physical world is prohibitively slow and expensive. Collecting the millions of grasp attempts required for convergence would take decades of robot time. Consequently, the industry relies heavily on Sim-to-Real transfer, using high-fidelity physics simulators to train the “brain” before deploying it to the “body.”

Domain Randomization Strategies

The primary obstacle in Sim-to-Real transfer is the “Reality Gap”, the subtle discrepancies between the simulated environment and the physical world (e.g., friction coefficients, sensor noise, lighting variations). Ambi Robotics and Plus One Robotics employ Domain Randomization to bridge this gap.

In this approach, the simulation does not attempt to perfectly mimic reality. Instead, it randomizes environmental parameters to extreme degrees during training.

Visual Randomization: The textures of objects, the color of the bin, and the position of light sources are randomized wildly (e.g., training a robot to pick parcels in a disco-lit room).

Physical Randomization: Physical parameters such as friction coefficients and sensor noise are varied in the same way.

By training on this distribution of “extreme” environments, the AI learns robust features that are invariant to environmental noise. When deployed to the real world, the robot perceives the physical environment as just another variation within its training distribution.
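In practice, domain randomization amounts to drawing a fresh set of simulator parameters for every training episode. A minimal sketch follows; the parameter names and ranges are illustrative assumptions rather than values used by any vendor named here.

```python
import random
from dataclasses import dataclass


@dataclass
class EpisodeParams:
    friction: float            # contact friction coefficient
    sensor_noise_std_m: float  # depth noise standard deviation, in meters
    light_intensity: float     # relative brightness of the scene light
    bin_rgb: tuple             # bin color as (r, g, b) in the range 0..1


def sample_episode(rng):
    """Draw one randomized environment; ranges are deliberately wide."""
    return EpisodeParams(
        friction=rng.uniform(0.2, 1.2),
        sensor_noise_std_m=rng.uniform(0.0, 0.003),
        light_intensity=rng.uniform(0.1, 3.0),
        bin_rgb=(rng.random(), rng.random(), rng.random()),
    )


rng = random.Random(42)
episodes = [sample_episode(rng) for _ in range(5)]
for params in episodes:
    print(params)
```

The ranges are intentionally broader than anything expected on the floor, so that real conditions fall well inside the training distribution.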

Generative Simulation and SplatSim

Advanced Sim-to-Real pipelines are now incorporating Gaussian Splatting and Neural Radiance Fields (NeRFs) to create photorealistic training environments. SplatSim is a framework that allows a robot to scan a real-world object once, and then generate thousands of photorealistic synthetic views of that object in the simulator. This allows for the rapid creation of “Digital Twins” of customer inventory, enabling the robot to practice on the customer’s specific SKUs in the cloud before the hardware is even installed.

Designing for the Unexpected: Recovery, Redundancy, and Real-Time Feedback

Operational reliability in robotics is defined not by success rates in ideal conditions, but by the system’s ability to recover from failure. “Corner cases” such as double picks, entanglements, and occlusions are the primary drivers of downtime.

The “Double Pick” Anomaly

In logistics, a frequent failure mode is the “double pick,” where a robot unintentionally grabs two items at once (e.g., two polybags stuck together by static, or a porous item lifting the one beneath it). If undetected, this leads to inventory inaccuracies downstream.

Detection: Advanced systems utilize a multi-stage validation process. After a pick, the robot may perform a “validation scan” in front of a secondary camera, or use force/torque sensors in the wrist to detect if the payload mass exceeds the expected SKU weight.
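The weight-based check can be sketched as a simple tolerance band around the expected SKU weight. The function name, labels, and the 25% tolerance are assumptions for illustration; a production system would tune the band per SKU and account for gripper tare and sensor noise.

```python
def classify_pick(measured_kg, expected_kg, tolerance=0.25):
    """Compare the payload mass at the wrist with the expected SKU weight."""
    if measured_kg < expected_kg * (1.0 - tolerance):
        return "missed_or_partial"
    if measured_kg > expected_kg * (1.0 + tolerance):
        return "suspected_double_pick"
    return "ok"


# Hypothetical 200 g polybag.
print(classify_pick(0.21, 0.20))
print(classify_pick(0.41, 0.20))
print(classify_pick(0.00, 0.20))
```

A "suspected_double_pick" result would typically route the item to a re-singulation step or an exception chute instead of letting it continue downstream.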

Entanglement and De-Clumping Strategies

Industrial components such as springs, wire harnesses, or connecting rods are prone to entanglement. A standard pick attempt often results in lifting a cluster of parts, triggering a collision or drop error.

AI-Driven Separation: Research into PickNet and PullNet architectures enables robots to recognize entanglement patterns. The AI can generate “separation primitives”, specific maneuvers to untangle items. This might involve “stirring” the bin (using the gripper to agitate the pile) or a “lift-and-drop” strategy where a cluster is raised and dropped from a calculated height to break the mechanical interlock.

Mechanical/Hybrid Solutions: Miso Robotics employs a hybrid approach in their automated kitchen assistants. Their system uses pneumatic actuators to physically shake the basket, effectively “de-clumping” food items before the vision system attempts to identify individual targets. This combination of mechanical agitation and AI perception is often more time-efficient than complex robotic manipulation alone.

Lighting Interference and Environmental Robustness

Warehouses are notoriously difficult optical environments. Skylights causing shifting sunbeams, flickering LED overheads, and shadows can wreak havoc on vision sensors.

Hardware Robustness: Modern sensors utilize High Dynamic Range (HDR) and narrow-band spectral filters (matched to the laser projector’s wavelength) to optically reject ambient light.

Algorithmic Robustness: Geometry-based perception can be inherently robust to lighting changes, as the physical edges of a part do not change with illumination. Simulation-driven approaches also address this by training across broad lighting variation so models learn to ignore shadows and glare.

Tactile Sensing and “Blind” Manipulation

When vision fails due to occlusion or extreme lighting, tactile sensing becomes the fallback.

Visuotactile Integration: The AnyRotate framework demonstrates how tactile sensors on robotic fingertips can be used to manipulate objects even when they are occluded from the camera. By sensing contact forces and slippage, the robot can perform “in-hand manipulation” (rotating a part to a better orientation) without relying on visual feedback. This closes the loop between perception and action, allowing for “blind” reliability.

Beyond Labor Replacement: Robotics as a Strategic Asset

The adoption of AI robotic sorting is not merely a technical decision but a strategic response to macro-economic pressures. The Return on Investment (ROI) calculation has evolved from a simple labor-substitution model to a broader analysis of supply chain resilience.

For readers evaluating whether this kind of system pencils out in their own operation, Zaic Design provides a free ROI calculator that lets you model baseline costs and “what-if” automation scenarios and then estimate payback: Try the ROI Calculator

ROI and Labor Dynamics

The primary driver for automation is the chronic labor shortage in the logistics sector. With turnover rates in warehouses often exceeding 100%, the cost of constantly recruiting and training new staff is substantial.

Labor Substitution: A single robotic cell typically replaces 1.5 to 2 Full-Time Equivalents (FTEs) per shift. In a 24/7 operation (3 shifts), that works out to 4.5 to 6 FTEs per cell, which can yield a rapid payback period.
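The payback arithmetic can be sketched in a few lines. The FTE figures come from the paragraph above and the $100,000 system cost is the lower bound cited for CAPEX in the next section; the loaded cost per FTE and the annual operating cost are placeholder assumptions that should be replaced with your own numbers.

```python
def replaced_ftes(ftes_per_shift, shifts_per_day):
    """Total FTEs displaced by one cell across all shifts."""
    return ftes_per_shift * shifts_per_day


def payback_months(capex, ftes, loaded_cost_per_fte, annual_operating_cost=0.0):
    """Months until cumulative net savings equal the upfront cost."""
    annual_savings = ftes * loaded_cost_per_fte - annual_operating_cost
    if annual_savings <= 0:
        return float("inf")  # the cell never pays for itself
    return 12.0 * capex / annual_savings


# Assumptions: $45,000 loaded cost per FTE and $30,000 per year to run the cell.
for per_shift in (1.5, 2.0):
    ftes = replaced_ftes(per_shift, 3)
    months = payback_months(100_000, ftes, 45_000, 30_000)
    print(f"{ftes} FTEs -> payback in {months:.1f} months")
```

The result is highly sensitive to the assumed labor cost and to how many shifts the cell actually runs, so a single-shift operation will see a much longer payback than the 24/7 case.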

Robots-as-a-Service (RaaS)

To mitigate the high initial CAPEX of robotic systems ($100k+), vendors like Covariant, Ambi, and Nimble have popularized the Robots-as-a-Service (RaaS) business model.

Model Structure: Instead of purchasing the hardware, the customer pays a monthly subscription fee or a “price per pick.” This shifts the cost from CAPEX to OPEX.

Incentive Alignment: RaaS aligns the vendor’s incentives with the customer’s success. Since the vendor is responsible for maintenance and, under per-pick pricing, is paid only for successful picks, it has a direct incentive to keep updating the software and improving its “Data Moat” to maximize system uptime.

What’s Next: Toward Fully Generalized Robotic Workcells

The current state of the art relies on a modular stack: Perception → Planning → Control. The future of robotic sorting lies in End-to-End Learning, where these distinct modules merge into a single, cohesive policy.

Visuomotor Policies

Emerging research into Large Physical Models suggests that robots will soon utilize “visuomotor policies” where raw camera pixels are mapped directly to motor torques. This mimics biological motor control, bypassing the need for explicit object detection or trajectory planning steps. Such systems promise “superhuman” dexterity, allowing robots to react to slips or dynamic changes in milliseconds.

The Data Flywheel and General Purpose Robots

As fleets of robots from companies like Covariant and Plus One continue to operate, they feed a “Data Flywheel.” Every corner case encountered by a robot in one facility is uploaded, learned, and propagated to the entire fleet. This accumulating “Data Moat” is driving the industry toward a General Purpose Robot: a system capable of walking into any warehouse, identifying any item, and performing any task with zero deployment time, without retraining or human-coded integration steps.

Some Thoughts

The integration of CAD-driven perception for precision manufacturing and Robotics Foundation Models for generalized logistics has fundamentally solved the “unstructured bin picking” problem. The convergence of these technologies, supported by advanced hardware and Sim-to-Real transfer, has transformed robotic sorting from an experimental novelty into the critical infrastructure of the modern supply chain. The industry is now entering a phase of rapid scaling, where the competitive advantage will belong to those who can most effectively leverage these intelligent, adaptive assets.

Our commitment to innovation and user-centered design makes Zaic Design a trusted partner for companies seeking to develop cutting-edge consumer products that seamlessly integrate technology, comfort, and functionality.

With expertise in product design, material selection, prototyping, and iterative engineering, we specialize in overcoming complex challenges in consumer device development. Our capabilities in automation integration allow us to offer scalable solutions that enhance both product development and manufacturing processes.

Whether you’re looking to advance your product line with innovative designs or explore automation solutions to streamline production, Zaic Design has the technical acumen and creativity to drive measurable success. Our automation expertise extends beyond production line enhancements, offering the potential to integrate automation into your product designs themselves, paving the way for smarter, more efficient devices.

Schedule your free consultation today and discover how Zaic Design can revolutionize your product development and manufacturing processes with industry-leading design and automation solutions tailored to your needs.
